Invisible light

After getting a new digital camera, I found I didn't use the old one much anymore. They're pretty similar, but one is built on technology four years older. So instead of leaving it to gather dust in the back of my drawer, I sent it off to be converted to shoot infrared! Now it sees people as having waxy skin and foliage as glowing and delicate.

Visible Light

Invisible Light

Let's be clear about what I'm talking about: the word infrared refers to a wide range of radiation, from the bottom (red) end of the visible spectrum at 750 nm all the way out to 1 mm, at which point people stop calling it infrared and start calling it microwaves. Infrared that's close to visible light ("near infrared") behaves similarly to visible light, unsurprisingly. It's emitted only by extremely hot objects (light bulbs, the sun, &c.) and reflects off of opaque objects. The night-vision, thermal-imaging stuff most people think of when they hear the word infrared is mid-infrared, which is emitted by merely warm things, such as humans.

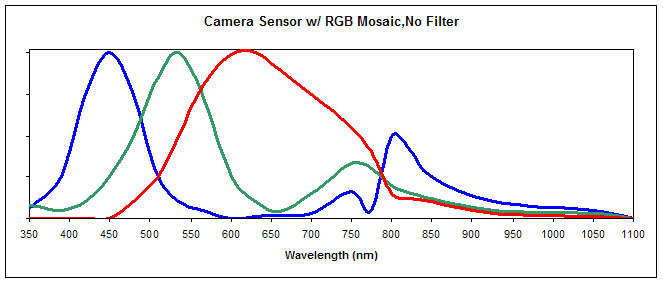

The CCD or CMOS sensors in digital cameras typically respond to visible light and a chunk of near-infrared: about 350-1000 nm. The eye responds only to roughly 380-750 nm.

I'd like to point out that the sensor doesn't have any idea what kind of light hit it; it just accumulates a charge and converts that to a single number for each pixel. Color photography is made possible by slapping a mosaic of red, green, and blue filters on top of the sensor; each pixel sees only one color. Software on the camera (or on a computer, if you're saving RAW files) combines this one-color-per-pixel data to output a full-color (e.g., RGB) image.
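To make the one-color-per-pixel idea concrete, here's a minimal sketch of that combining step. It assumes an RGGB (Bayer-style) layout, which is my assumption and not necessarily what any given camera uses, and it uses the crudest possible interpolation (average the neighbors). The names `bayer_color` and `demosaic` are made up for illustration; real camera software is far more sophisticated.

```python
def bayer_color(row, col):
    """Which color filter sits over the pixel at (row, col).

    Assumes a hypothetical RGGB layout: R G / G B repeating.
    """
    if row % 2 == 0:
        return "R" if col % 2 == 0 else "G"
    return "G" if col % 2 == 0 else "B"


def demosaic(raw):
    """Naive demosaic: average the nearby samples of each color.

    `raw` is a list of rows; each entry is the single number the sensor
    recorded at that pixel. Returns a list of rows of (r, g, b) tuples.
    Assumes the image is at least 2x2 so every neighborhood contains
    all three colors.
    """
    height, width = len(raw), len(raw[0])
    out = []
    for row in range(height):
        out_row = []
        for col in range(width):
            sums = {"R": 0.0, "G": 0.0, "B": 0.0}
            counts = {"R": 0, "G": 0, "B": 0}
            # Look at the 3x3 neighborhood, clipped at the image edges.
            for r in range(max(0, row - 1), min(height, row + 2)):
                for c in range(max(0, col - 1), min(width, col + 2)):
                    color = bayer_color(r, c)
                    sums[color] += raw[r][c]
                    counts[color] += 1
            out_row.append(tuple(sums[k] / counts[k] for k in "RGB"))
        out.append(out_row)
    return out
```

Notice that nothing in here knows or cares what wavelength actually produced each number; it only knows which filter the pixel was sitting under.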

These red, green, and blue filters have a response curve that looks like this:

Note that this lets some infrared light through. That's not okay; "accurate" color reproduction demands only responding to the same light the eye can see. So the manufacturer sticks another filter on top of the mosaic, one which blocks everything beyond visible light. One that blocks infrared.

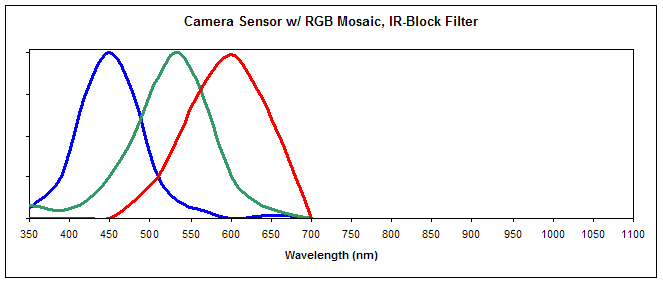

This leaves your pixel response curves looking like this:

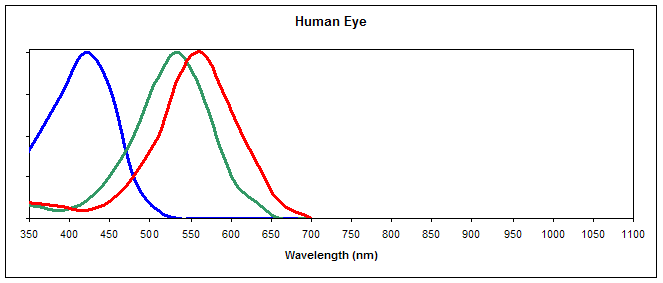

which is deliberately similar to the response spectra for the cones in the human eye:

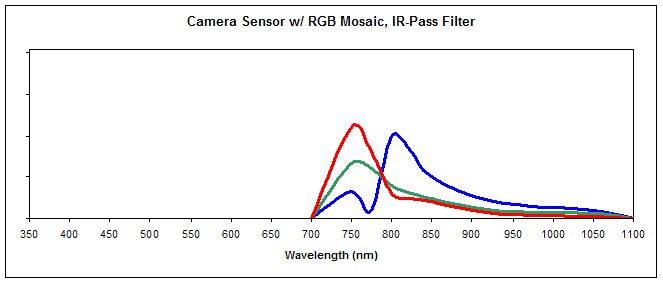

So! If you take off that filter, and replace it with one which blocks visible light but lets infrared through (I used a Hoya R72 filter), now you're seeing like such:

None of the software has any idea that anything's different; it knows that the sensor element under a blue filter got hit by some light, so it puts a bit of blue in the resulting JPEG. Thus we have translated invisible light into a visible image!

False Color

The fact that the red, green, and blue filters have different response curves above the filter's cutoff means different wavelengths of infrared light will trigger different sensor elements in the RGB mosaic. Hey, that's how the camera recognizes regular color! This opens up the possibility of generating false-color images: mapping different IR frequencies to different visible colors.

Looking back at the camera's response curve, the red covers a lot more area than the rest of the colors. Indeed, the converted images, without any correction, have a strong reddish cast:


If I tell the camera a picture of dense foliage is the white point, then it does a little better:
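Setting the white point this way roughly amounts to scaling each channel so the reference patch averages out to neutral gray. Here's a minimal sketch of that idea, not what the camera's firmware actually does; `white_balance_gains` and `apply_gains` are hypothetical helper names, and the 8-bit range is an assumption.

```python
def white_balance_gains(patch):
    """Per-channel gains that make the average of `patch` neutral gray.

    `patch` is a list of (r, g, b) pixels sampled from the reference area
    (here, the dense foliage). Assumes no channel averages to zero.
    """
    n = len(patch)
    avg = [sum(p[i] for p in patch) / n for i in range(3)]
    target = sum(avg) / 3  # keep overall brightness roughly the same
    return tuple(target / a for a in avg)


def apply_gains(pixel, gains, max_value=255):
    """Scale one (r, g, b) pixel by the gains, clipping at max_value."""
    return tuple(min(max_value, round(v * g)) for v, g in zip(pixel, gains))
```

With a strongly red reference patch, the red gain comes out below 1 and the blue gain well above 1, which is what knocks down the reddish cast.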

Back to that graph: The red pixels' peak is at a shorter wavelength than the blue and green peaks. This is backwards from normal light. So the sky still looks sepia. If we swap the red and blue channels, all the colors' response curves share similar relationships to the sensitivities of the eye to those colors, and the sky is blue. Tweaking the levels & saturation a bit gets us this:

Things still aren't exactly normal, but that's fine; everything is coming from a whole nother part of the spectrum. But at least now they're similar enough to look creepy instead of just zany.
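The red/blue swap itself is about as simple as image processing gets. A minimal sketch, assuming the image is just a list of rows of (r, g, b) tuples (`swap_red_blue` is a made-up name; any image editor's channel mixer does the same job):

```python
def swap_red_blue(image):
    """Return a copy of `image` with the red and blue channels exchanged.

    `image` is a list of rows of (r, g, b) tuples.
    """
    return [[(b, g, r) for (r, g, b) in row] for row in image]
```

Green is left alone, and doing the swap twice gets you back the original image.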

This whole process turns these real colors

into these false colors


Comments

kick ass.

this was awesome.

with that technology, i'd be tempted to make rave flyers. it seems a natural application.
